(Semi)-automatic dataset preparation with youtube-dl and YOLOv3

Andrea Ranieri - CNR-IMATI

Some warm up with a question

- What is this?

- DSS acolytes are not allowed to answer

Some warm up with a question

- It's Mona Lisa!

- I'm surprised you didn't recognize her in all her 32x32 grayscale ASCII beauty

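To a computer, a grayscale image is just a grid of numbers. As a minimal sketch of how such a grid can be rendered as ASCII art, the snippet below maps each 0-255 pixel value to a character. The character ramp and the tiny 4x4 test image are arbitrary choices made for illustration, not the ones used for the slide.

```python
# Characters ordered from darkest to lightest (an arbitrary, assumed ramp).
RAMP = "@%#*+=-:. "


def to_ascii(pixels):
    """Render rows of 0-255 grayscale values as lines of ASCII characters."""
    lines = []
    for row in pixels:
        # Scale each 0-255 value to an index into RAMP.
        lines.append("".join(RAMP[value * (len(RAMP) - 1) // 255] for value in row))
    return "\n".join(lines)


# A tiny 4x4 "image": a dark-to-light horizontal gradient.
image = [[0, 85, 170, 255] for _ in range(4)]
art = to_ascii(image)
print(art)
```

A 32x32 picture works the same way, just with 32 rows of 32 values each.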

Enter the CNNs

- We're not designed to work with numbers

- We're designed to work with features

- by billions of years of evolution

- by decades of learning through a continuous stream of (stereo) video data (and much more)

- So we (as humans, not me sadly) invented Convolutional Neural Networks

Credits: https://www.kaggle.com/keras/resnet50

Short adversarial break #1

- Everything ok so far?

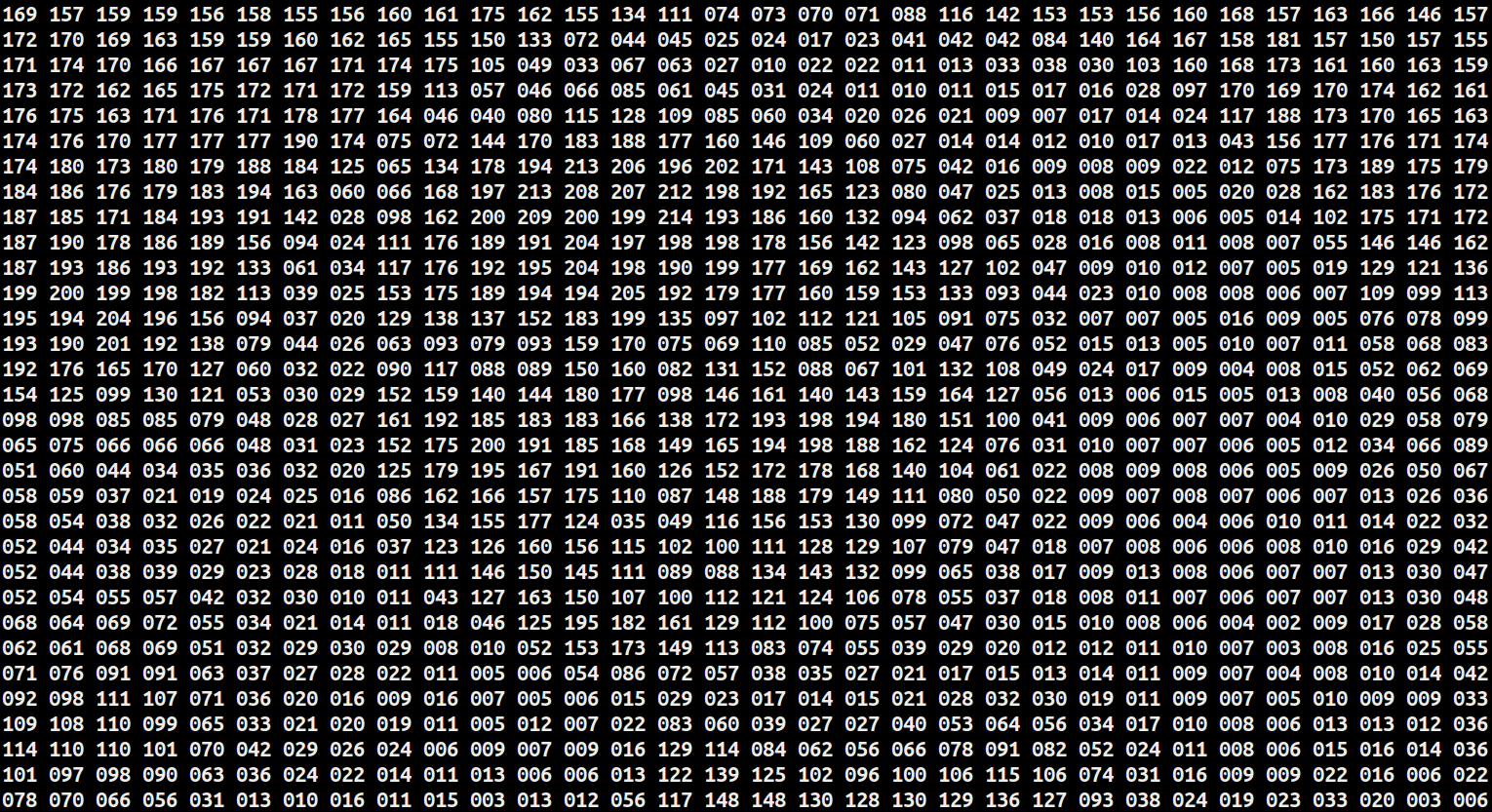

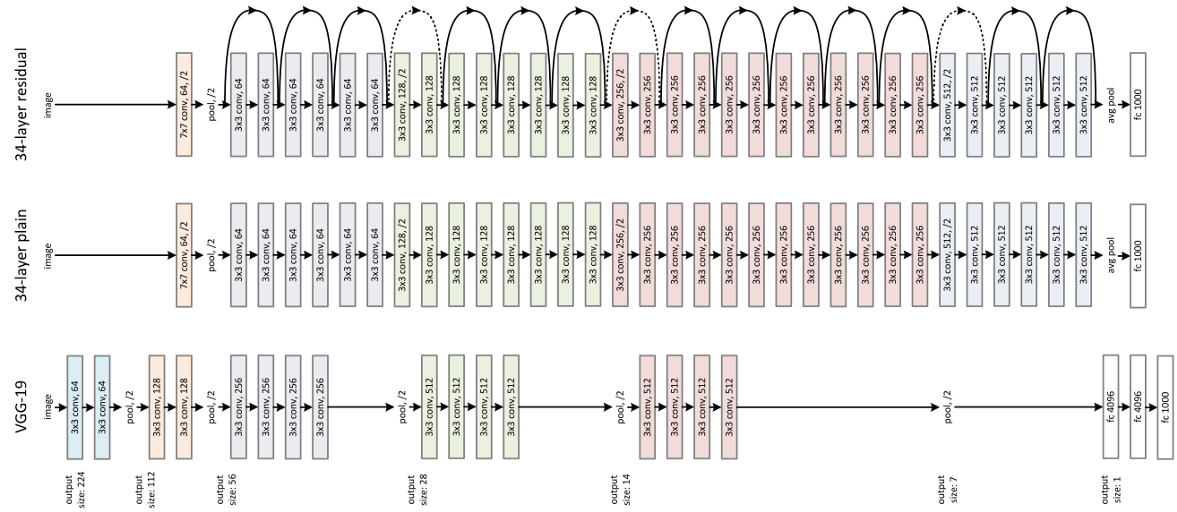

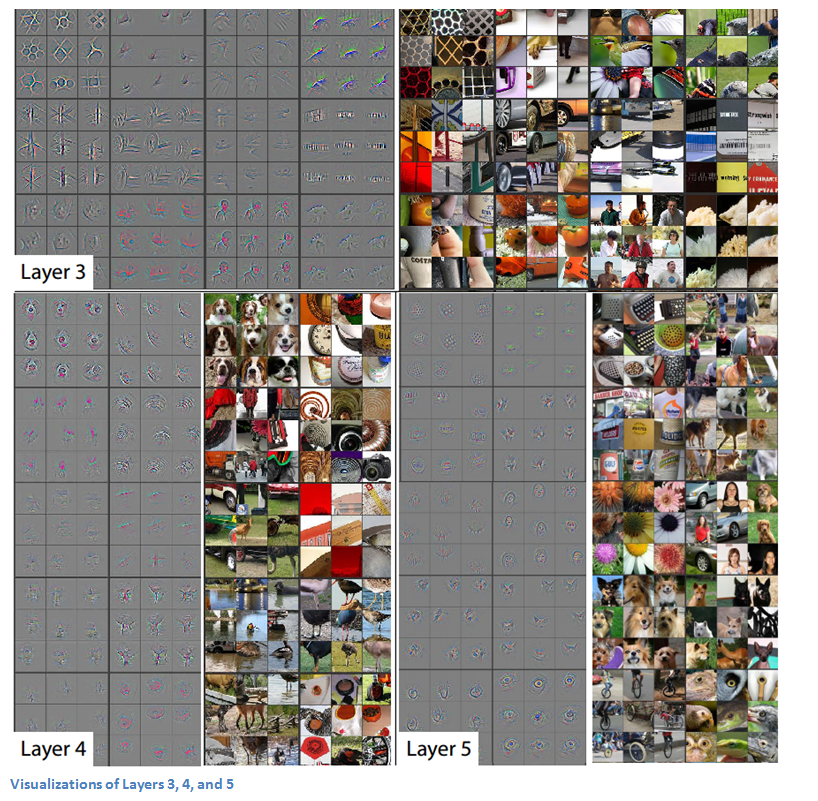

CNNs in two slides

- Mostly huge feature extractors

- 100+ layers of convolutional filters

- each convolutional layer "automatically" converges towards the optimum during training, according to a loss function

- 1 (or even zero) fully connected layers

- to actually classify the image based on its features

- 1 output layer with (e.g. for classification) n neurons for n classes that fire in the range (0, 1)
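The two building blocks above can be sketched in a few lines of plain Python: a convolutional filter that extracts a feature map, and an output layer whose n values fall in (0, 1). This is only an illustration under simplifying assumptions. The kernel here is written by hand, whereas in a real CNN its values are learned during training, and softmax is just one common choice for a classification output.

```python
import math


def conv2d(image, kernel):
    """Slide the kernel over the image (no padding, stride 1)."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(image[i + a][j + b] * kernel[a][b]
                for a in range(kh) for b in range(kw))
            for j in range(out_w)
        ]
        for i in range(out_h)
    ]


def softmax(logits):
    """Map n raw scores to n values in (0, 1) that sum to 1."""
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


# A 4x4 image with a vertical edge between the dark and bright halves.
image = [[0, 0, 1, 1] for _ in range(4)]
# Hand-written vertical-edge detector (a trained CNN would learn this).
kernel = [[-1, 1], [-1, 1]]
feature_map = conv2d(image, kernel)

# Three made-up raw scores for three classes.
probs = softmax([2.0, 1.0, 0.1])
print(feature_map, probs)
```

The feature map responds only where the edge is, which is the kind of "feature" the later layers build on.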

Credits: https://www.kdnuggets.com/2016/09/9-key-deep-learning-papers-explained.html


CNNs in two slides

- The output layer typically fires in the range (0, 1) or (-1, 1)

- it's up to the data scientist to give a meaning to the output

- and make it converge to the optimum by choosing the architecture, the loss function, and the input data with its labels
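As a rough sketch of what "giving a meaning to the output" can look like: a sigmoid squashes a raw score into (0, 1), tanh squashes it into (-1, 1), and a loss function then scores that output against a label. Binary cross-entropy and the "dog" label are assumptions picked for illustration. Other tasks use other losses.

```python
import math


def sigmoid(x):
    """Squash any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))


def binary_cross_entropy(p, label):
    """Loss for a predicted probability p against a 0/1 label."""
    return -(label * math.log(p) + (1 - label) * math.log(1 - p))


raw = 2.0                 # made-up raw score from the last layer
p = sigmoid(raw)          # we decide to read this as "probability of dog"
t = math.tanh(raw)        # alternative activation with range (-1, 1)

loss_if_dog = binary_cross_entropy(p, 1)      # label says "dog"
loss_if_not_dog = binary_cross_entropy(p, 0)  # label says "not dog"
print(p, t, loss_if_dog, loss_if_not_dog)
```

The same output gets a small loss when the label agrees with it and a large one when it does not. Training pushes the weights in the direction that lowers this number.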

Credits: https://www.kdnuggets.com/2016/09/9-key-deep-learning-papers-explained.html

Short adversarial break #2

- Everything ok so far?

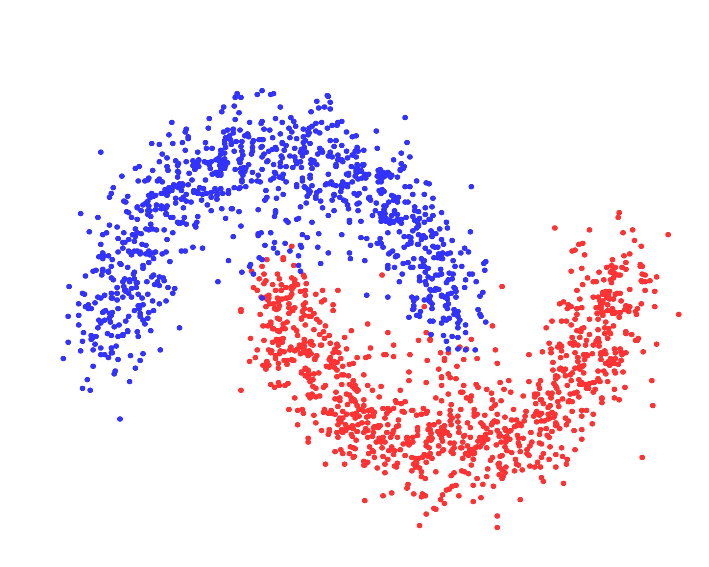

One step back: ML vs. Programming

- Definitions:

- “Machine Learning is a field of study that gives computers the ability to learn without being explicitly programmed.”

- “Deep learning is a collection of Machine Learning algorithms used to model high-level abstractions in data through the use of model architectures, which are composed of multiple nonlinear transformations.”
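A toy contrast between the two approaches, as a sketch only: in the first function the rule is written by the programmer, in the second the rule is recovered from example data with an ordinary least-squares fit. The Celsius-to-Fahrenheit task and the four data points are assumptions chosen because the true rule is well known.

```python
def programmed_c_to_f(celsius):
    """Explicit programming: a human writes down the rule."""
    return celsius * 9 / 5 + 32


def fit_line(xs, ys):
    """'Learning': estimate slope and intercept from examples (least squares)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    slope = (sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
             / sum((x - mean_x) ** 2 for x in xs))
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Example measurements: (Celsius, Fahrenheit) pairs.
celsius = [-40.0, 0.0, 37.0, 100.0]
fahrenheit = [-40.0, 32.0, 98.6, 212.0]

slope, intercept = fit_line(celsius, fahrenheit)
print(slope, intercept)
```

The fit lands on roughly the same rule (slope near 1.8, intercept near 32) without anyone having typed it in.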

Credits: https://www.ml.uni-saarland.de/code/pSpectralClustering/pSpectralClustering.htm

Data: the secret sauce of DL

- Now we have three main "high level knobs" to play with:

- the architecture (or model) of our CNN

- how our network crunches the features

- the loss function

- what we define as "success" or "failure" during optimization

- the input data


- So what can we do if we find a DL problem that we can't solve?

- simple: get more data!

Short adversarial break #3

- Everything ok so far?

Enter YOLO

- YOLO (You Only Look Once) is a state-of-the-art real-time object detection system

- its latest iteration (YOLOv3, 2018) can recognize up to 80 classes (person, bicycle, car, motorbike, aeroplane, etc.)

- but it can be retrained to detect custom classes

- it's a CNN that does more than simple classification

- it has been trained from start to end to output bounding boxes and class names of the detected objects

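A detector's output (bounding boxes plus class names and confidences) usually needs a little clean-up before it is useful. The sketch below shows one common post-processing step, confidence filtering followed by non-maximum suppression, on hand-made detections. The `Detection` type, the thresholds and the sample boxes are all hypothetical and are not taken from the YOLO code base.

```python
from typing import NamedTuple


class Detection(NamedTuple):
    label: str
    confidence: float
    box: tuple  # (x_min, y_min, x_max, y_max) in pixels


def iou(a, b):
    """Intersection over union of two boxes."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    union = ((a[2] - a[0]) * (a[3] - a[1])
             + (b[2] - b[0]) * (b[3] - b[1]) - inter)
    return inter / union if union > 0 else 0.0


def non_max_suppression(detections, min_confidence=0.5, max_iou=0.45):
    """Drop weak detections, then drop same-class boxes that overlap a stronger one."""
    candidates = sorted(
        (d for d in detections if d.confidence >= min_confidence),
        key=lambda d: d.confidence,
        reverse=True,
    )
    kept = []
    for det in candidates:
        if all(det.label != k.label or iou(det.box, k.box) <= max_iou for k in kept):
            kept.append(det)
    return kept


raw = [
    Detection("dog", 0.92, (10, 10, 110, 110)),
    Detection("dog", 0.80, (15, 12, 112, 108)),      # near-duplicate of the first
    Detection("person", 0.30, (200, 50, 260, 200)),  # below the confidence threshold
    Detection("bicycle", 0.75, (120, 40, 220, 140)),
]
kept = non_max_suppression(raw)
print([(d.label, d.confidence) for d in kept])
```

Only the strongest "dog" box and the "bicycle" box survive. The thresholds are knobs to tune per dataset.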

DL pipelines

- YouTube is an online platform with billions of hours of video

- if we could create a pipeline with youtube-dl and YOLO...

- we could have something close to an infinite number of images!

- Time for a live demo... with dogs 🐶
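The youtube-dl plus YOLO pipeline could be sketched roughly as follows. This is not the code used in the demo. The helper names, the one-frame-per-second sampling, the confidence threshold and the `fake_detect` stand-in are all assumptions made for illustration. Only the `-f` and `-o` options and the `%(id)s.%(ext)s` output template come from the youtube-dl command line itself, and `VIDEO_ID` is a placeholder.

```python
def download_command(url, out_dir="videos"):
    """Build a youtube-dl command that saves the video as <out_dir>/<id>.<ext>."""
    return ["youtube-dl", "-f", "mp4", "-o", f"{out_dir}/%(id)s.%(ext)s", url]


def frames_to_sample(total_frames, fps, every_seconds=1.0):
    """Indices of the frames to inspect (here: one per second of video)."""
    step = max(1, round(fps * every_seconds))
    return list(range(0, total_frames, step))


def keep_frame(detections, target="dog", min_confidence=0.6):
    """True if the detector found the target class with enough confidence."""
    return any(label == target and conf >= min_confidence
               for label, conf in detections)


def build_dataset(frame_indices, detect, target="dog"):
    """Return the indices of the frames worth saving as training images."""
    return [i for i in frame_indices if keep_frame(detect(i), target)]


def fake_detect(frame_index):
    """Stand-in for a real detector: returns (label, confidence) pairs."""
    return [("dog", 0.9)] if frame_index % 50 == 0 else [("person", 0.8)]


cmd = download_command("https://www.youtube.com/watch?v=VIDEO_ID")
indices = frames_to_sample(total_frames=100, fps=25)
selected = build_dataset(indices, fake_detect)
print(cmd, indices, selected)
```

In a real run, `cmd` would be executed (for example with `subprocess.run(cmd, check=True)`), the frames decoded with a video library, and `fake_detect` replaced by a call into an actual YOLO model. The detector's boxes can then double as draft labels, which is where the "semi-automatic" part comes in.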

Thanks for your attention

- Questions?